When One AWS Tag Broke Our Production NLB

Last year, we started noticing a strange issue in several of our AWS Network Load Balancers.

After our regular weekend maintenance, which involved:

Elasticsearch (ES) image upgrades (StatefulSet running in EKS)

EKS node group upgrades

our Network Load Balancer (NLB) for ES suddenly stopped registering new targets.

To mitigate this, the engineers had to identify where ES pods were running, copy the instance IDs and manually add them to the target group.

At Glean, we have hundreds of AWS customer accounts, each with their own EKS cluster in a single tenant setup.

This issue appeared randomly and only during weekends, making it harder to reason about. We didn’t have enough observability for AWS Load Balancer Controller, so no historical logs to debug when the issue happened. Since the failures always followed ES upgrades, we assumed it to be an issue with it.

When I finally got a chance to triage this deeply, the root cause turned out to be something entirely different and unexpected.

Architecture Overview

ES runs as a StatefulSet with 3 pods in a dedicated node group.

It listens on two ports - 9200 (HTTP) and 9300 (TCP) and exposed via a Kubernetes Service of type LoadBalancer, which the AWS Load Balancer Controller (AWS LBC) creates as an external NLB (target type as instance).

Traffic flow

Client -> NLB -> EC2 instances (Target group) -> NodePort -> kube-proxy -> ES podsThe target type matters here because traffic is routed via EC2 instances rather than directly to pods.

In instance target mode, the NLB forwards traffic to a NodePort on worker nodes. kube-proxy updates iptables such that every node in the cluster listens on that NodePort, regardless of whether a pod is running locally.

This also means, spot nodes can receive the traffic and may die, results in connection reset.

To mitigate this, we configured:

externalTrafficPolicy: LocalThis ensures that only the nodes with ES pods will receive traffic.

Role of AWS loadbalancer Controller

AWS LBC is a controller that satisfies Kubernetes Service resources by provisioning Network Load Balancers.

It is responsible for

Creating Load Balancers

Creating Target Groups

Registering targets

Configuring health checks

Continuous reconciliation as per the K8s spec

When we checked the controller logs, we saw continuous reconciliation failures.

Error logs (simplified):

{"msg":"Requesting network requeue due to error from ReconcileForNodePortEndpoints","tgb":{"name":"k8s-elastics-xxx","namespace":"elasticsearch-1-namespace"},

{"msg":"Reconciler error", "controllerGroup":"NLBv2.k8s.aws", "controllerKind":"TargetGroupBinding","TargetGroupBinding":{"name":"k8s-elastics-xxx-1b854ad070","namespace":"elasticsearch-1-namespace"}

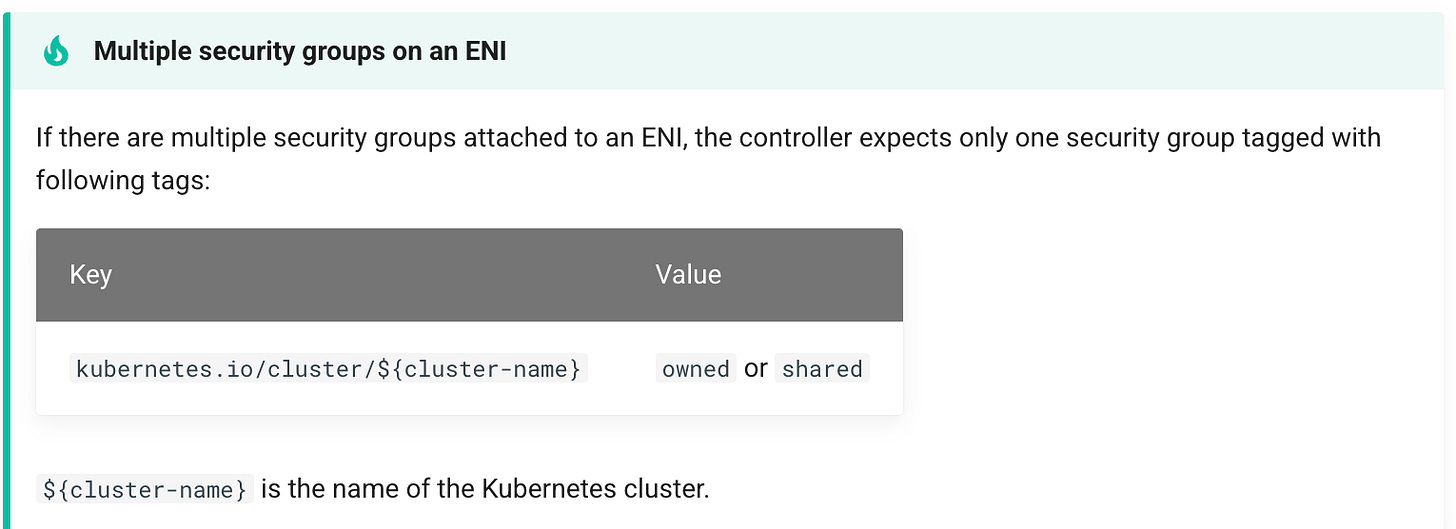

"error":"expected exactly one securityGroup tagged with kubernetes.io/cluster/cluster-name for eni eni-0bc35d6b81992f2d3, got: [sg-0f5c2897d0ec362e8 sg-0fa198e24300075e0] (clusterName: cluster-name)"}During the reconciliation process, it will query for security groups attached to the ENIs to allow traffic from LB to the nodes.

Given all the nodes retain the cluster security group, it uses kubernetes.io/cluster/clusterName: owned label to discover the SGs.

AWS-LBC-security-groups-selection

It expects only one SG to be available (one that gets created by default during cluster creation) with the label. In this case, it found two security groups and so it stopped the reconciliation. This is the actual issue that led to the failure of registering new targets.

aws ec2 describe-security-groups \

--filters "Name=tag-key,Values=kubernetes.io/cluster/cluster-name" \

--query 'SecurityGroups[].{id:GroupId,name:GroupName,tags:Tags}' \

--region us-west-1Output (simplified):

[

{

"id": "sg-0f5c2897d0ec362e8",

"name": "eks-cluster-sg-cluster-name-1347457926",

"tags": [

{"Key": "aws:eks:cluster-name", "Value": "cluster-name"},

{"Key": "kubernetes.io/cluster/cluster-name", "Value": "owned"}

]

},

{

"id": "sg-0fa198e24300075e0",

"name": "terraform-20250312123836166600000001",

"tags": [

{"Key": "kubernetes.io/cluster/cluster-name", "Value": "owned"},

{"Key": "Name", "Value": "eks-xxx-yyy"}

]

}

]

How the label got added

We had recently adopted Karpenter (couple of months ago) as the cluster autoscaler solution in AWS. It was rolled out in phases to the AWS accounts and during the rollout, we accidentally added the kubernetes.io/cluster/cluster-name: owned tag to an additional security group.

This caused the AWS LBC reconciliation failures, which is a known issue documented by Karpenter.

When launching nodes, Karpenter uses all the security groups that match the selector.

If you choose to use the kubernetes.io/cluster/$CLUSTER_NAME tag for discovery, note that this may result in failures using the AWS Load Balancer controller.

The Load Balancer controller only supports a single security group having that tag key. See this issue for more details.

Why this issue didn’t occur on the weekdays

Even though the LBC reconciliation was failing regularly, it didn’t affect the Elasticsearch during the weekdays.

This is because ES is running in a dedicated node group.

Weekdays:

No nodegroup rotation

Existing nodes and their NLB registrations remained untouched

No changes in the targets

Weekends:

Nodegroup upgrade created new nodes, removed old nodes

Old nodes in the target groups no longer exists

Reconciliation hit the security groups error and skipped registering targets

NLB ended up with no healthy targets

The Fix

We simply removed the tag from the extra security group.

Once we removed, immediately LBC started reconciling successfully. After the weekend nodegroup upgrades, new nodes were registered correctly.

Logs after fix (simplified):

"msg": "registering targets",

"arn": "arn:aws:elasticloadbalancing:us-west-1:...:targetgroup/k8s-elastics-xxx-1b854ad070/...",

"targets": [

{"Id":"i-00778c57210a62f39","Port":30301},

{"Id":"i-069e316e76e8e2074","Port":30301},

{"Id":"i-06ae7fb26e916f702","Port":30301},

{"Id":"i-06f7a589b03ae78c1","Port":30301}

]

...

"msg": "Successful reconcile"

Longer term improvements

We also made longer‑term improvements so that this class of issue is less likely to occur again.

Switch Elasticsearch NLB target type to IP

For ES, We’ve changed the target type as IP from instance

service.beta.kubernetes.io/aws-load-balancer-nlb-target-type: ipLBC now registers pod IPs as NLB targets for ES. All other services were using this already.

The path becomes

Client -> NLB -> PodIP:ContainerIt no longer needs to treat every node as a backend via NodePort, so the particular “pick a cluster SG from ENI SGs” path is not exercised for the NLB.

This also removes one redundant network hop from the path.

Terraform validations on security group tags

We added Terraform tests/validations to ensure:Only the EKS cluster security group can carry the

kubernetes.io/cluster/<cluster-name>tag.Any attempt to add that tag to additional SGs fails validation.

Improving Observability of AWS LBC

After this incident, we also improved the observability of AWS LBC to detect similar issues quickly.

Critical alerts

Reconciliation failures (error %)

Metric:

controller_runtime_reconcile_errors_total(rate, filtered by controller).Signal: sustained increase in error rate for targetGroupBinding / Service reconciliation.

Impact: load balancers, target groups, and targets may not be created/updated/deleted as desired.

Workqueue depth

Metric:

workqueue_depth(per controller).Signal: queue length growing and not draining.

Impact: delayed provisioning and updates of NLB/ALB resources.

Supporting indicators

P95 reconcile latency

Metric:

controller_runtime_reconcile_time_seconds_bucket.Signal: shows how long it takes to process 95% of reconciliation requests. High latency can indicate AWS API throttling, permission issues, or controller resource constraints.

Impact: slower provisioning and updates of AWS resources.

AWS API errors

Metric:

aws_api_requests_total{job="aws-load-balancer-controller",status!~"2.."}.Signal: Indicates failures when communicating with AWS services.

Non‑2xx responses from AWS APIs (ELBv2, EC2, etc.) directly affect LBC’s ability to reconcile state.

Lessons learned and key takeaways

Tag hygiene matters - We should enforce validations on critical tags used by the controllers.

Observability for Control plane - Control plane metrics are equally (if not more) important than application metrics. We should identify critical controller workflows and define SLOs and alerts around them.

Always validate assumptions - Correlation vs causation is real. In our case, the ES upgrade was a red herring that delayed root cause identification.

Create reproducers - reproducer setups help uncover blind spots in observability and significantly speed up debugging.